Smart Code Start 655cf838c4da2 Revealing Digital Token Analysis

Smart Code Start 655cf838c4da2 reveals a disciplined framework for digital token analysis. It couples on-chain scrutiny with data-driven insights, mapping supply dynamics, liquidity depth, staking, and governance signals to price movements. The approach emphasizes transparent methodologies, reproducible results, and robust validation, using sensitivity tests and external benchmarks to check that findings hold up. The result is a practical, trackable path for judging how observable metrics bear on long-term token sustainability and risk.

What Is Digital Token Analysis and Why It Matters

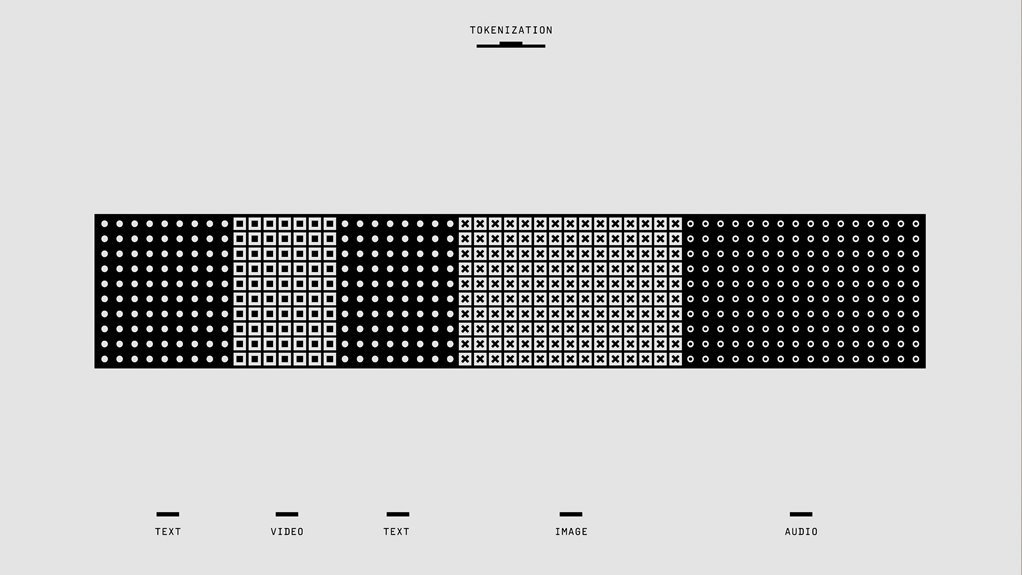

Digital token analysis is the systematic evaluation of blockchain-based tokens to understand their behavior, value drivers, and risk profiles. It distills raw data into actionable insights about token distribution, liquidity pools, price signals, and on-chain metrics. This supports informed decisions, highlights exposure, and clarifies how network activity aligns with governance, utility, and long-term sustainability.
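As one concrete way to evaluate token distribution, the sketch below summarizes how concentrated holdings are. It is a minimal example under stated assumptions: `balances` is a hypothetical list of per-address balances already collected from some data source, and the helper names `top_n_share` and `gini` are illustrative, not part of any library. The Gini coefficient is a standard inequality measure used here as one possible concentration metric.

```python
# Minimal sketch: measure how concentrated a token's holder distribution is.
# Assumption: `balances` holds per-address token balances collected elsewhere
# (block explorer export, node query, etc.); no specific data source is implied.


def top_n_share(balances: list[float], n: int) -> float:
    """Fraction of the total balance held by the n largest holders."""
    total = sum(balances)
    if total <= 0:
        raise ValueError("total balance must be positive")
    largest = sorted(balances, reverse=True)[:n]
    return sum(largest) / total


def gini(balances: list[float]) -> float:
    """Gini coefficient: 0 means equal holdings, values near 1 mean one dominant holder."""
    values = sorted(balances)
    count = len(values)
    total = sum(values)
    if count == 0 or total <= 0:
        raise ValueError("need at least one positive balance")
    # Closed form on ascending data with 1-based ranks i:
    # G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(i * x for i, x in enumerate(values, start=1))
    return 2 * weighted / (count * total) - (count + 1) / count


if __name__ == "__main__":
    # Hypothetical balances for five addresses.
    sample = [500.0, 200.0, 150.0, 100.0, 50.0]
    print(f"top-2 share: {top_n_share(sample, 2):.2f}")  # top-2 share: 0.70
    print(f"gini: {gini(sample):.3f}")  # gini: 0.400
```

A high top-N share or a Gini value close to its maximum suggests that a few holders could move the market, which feeds directly into the token's risk profile.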

How Tokenomics Shapes Price and Risk in Real Time

Tokenomics exerts an immediate, measurable influence on price and risk, and that influence can be tracked by mapping token supply dynamics, distribution patterns, and incentive structures to observable market signals.

The analysis highlights tokenomics dynamics as core drivers, linking issuance, burn rates, staking yields, and liquidity to price volatility.
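The link between issuance, burns, and staking can be made concrete with a simple supply ledger. The sketch below assumes a hypothetical `SupplyPeriod` record and treats circulating supply as total supply minus tokens locked in staking; real tokens may also exclude vesting or treasury balances, which this simplified model ignores.

```python
from dataclasses import dataclass


@dataclass
class SupplyPeriod:
    """Hypothetical per-period supply flows, in token units."""
    issued: float        # newly minted tokens (e.g. emissions and staking rewards)
    burned: float        # tokens permanently destroyed
    staked_delta: float  # net change in staked tokens (positive = more locked up)


def project_circulating(total_supply: float, staked: float,
                        periods: list[SupplyPeriod]) -> list[float]:
    """Circulating supply (total minus staked) after each period."""
    history = []
    for p in periods:
        total_supply += p.issued - p.burned
        staked += p.staked_delta
        if not 0 <= staked <= total_supply:
            raise ValueError("staked balance out of range")
        history.append(total_supply - staked)
    return history


if __name__ == "__main__":
    periods = [
        SupplyPeriod(issued=10_000, burned=2_000, staked_delta=5_000),
        SupplyPeriod(issued=10_000, burned=15_000, staked_delta=-1_000),  # burn outpaces issuance
    ]
    print(project_circulating(1_000_000, 200_000, periods))  # [803000, 799000]
```

In the second period, burns exceeding issuance shrink total supply, so circulating supply falls even though some staked tokens are released.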

Risk forecasts are built by correlating macroeconomic factors with on-chain metrics, which supports disciplined, data-driven assessment of potential downside scenarios.
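Correlating a macro factor with an on-chain metric can start with a plain Pearson correlation, optionally lagged to test whether the driver tends to lead the response. This is a minimal sketch: the series are hypothetical equal-length, equally spaced observations, and the function names `pearson` and `lagged_correlation` are illustrative.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(sxx * syy)


def lagged_correlation(driver: list[float], response: list[float], lag: int) -> float:
    """Correlate driver[t] with response[t + lag]: does the driver lead by `lag` steps?"""
    if lag < 0 or lag >= len(driver) - 1:
        raise ValueError("lag out of range")
    return pearson(driver[: len(driver) - lag], response[lag:])


if __name__ == "__main__":
    macro_index = [1.0, 3.0, 2.0, 5.0, 4.0, 6.0]     # hypothetical macro factor
    active_addrs = [0.0, 1.0, 3.0, 2.0, 5.0, 4.0]    # hypothetical on-chain metric
    print(f"{lagged_correlation(macro_index, active_addrs, 1):.2f}")  # 1.00
```

Correlation alone does not establish causation, and on short samples a high coefficient can arise by chance, so a result like this is one input to a risk assessment rather than a conclusion.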

Practical Data-Driven Methods for Forecasting Token Trends

Practical data-driven forecasting of token trends relies on a structured, multi-factor approach that integrates on-chain metrics, market microstructure, and macro indicators. The method emphasizes observable signals rather than narratives, combining token liquidity, governance signals, and staking dynamics to calibrate risk and reward. Price resilience depends largely on liquidity depth, while governance signals shape actionable, forward-looking expectations within a disciplined, data-focused framework.
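Why liquidity depth supports price resilience can be shown with a constant-product automated market maker, where pool reserves satisfy x · y = k. Assuming such a pool (the pricing model used by Uniswap v2-style exchanges) and ignoring trading fees, the sketch below computes how far the spot price moves when a buyer spends a given amount of the quote asset. The function name and reserve figures are hypothetical.

```python
def constant_product_price_impact(reserve_token: float, reserve_quote: float,
                                  quote_in: float) -> float:
    """Fractional rise in the token's spot price after buying it with `quote_in`
    from a hypothetical x * y = k pool. Trading fees are ignored."""
    if reserve_token <= 0 or reserve_quote <= 0 or quote_in <= 0:
        raise ValueError("reserves and trade size must be positive")
    k = reserve_token * reserve_quote
    price_before = reserve_quote / reserve_token  # quote units per token
    new_quote = reserve_quote + quote_in          # buyer adds the quote asset
    new_token = k / new_quote                     # invariant fixes the token reserve
    price_after = new_quote / new_token
    return price_after / price_before - 1


if __name__ == "__main__":
    trade = 1_000.0
    shallow = constant_product_price_impact(10_000.0, 10_000.0, trade)
    deep = constant_product_price_impact(100_000.0, 100_000.0, trade)
    print(f"shallow pool: {shallow:.2%}, deep pool: {deep:.2%}")  # shallow pool: 21.00%, deep pool: 2.01%
```

For the same 1,000-unit purchase, a pool holding 10,000 of each asset moves about 21%, while one holding 100,000 of each moves about 2%: deeper reserves absorb the same order flow with far less price impact.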

Common Pitfalls and How to Validate Your Token Analyses

The previous discussion on data-driven forecasting provides a solid foundation for evaluating token analyses, but practical application requires attention to common pitfalls and robust validation.

The main pitfalls are data quality issues, overfitting, and misinterpreted signals; bias detection and external benchmarking are the primary safeguards against them.
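One common guard against overfitting is walk-forward evaluation: split the data chronologically, forecast each later point using only earlier data, and compare the error against a naive baseline. The sketch below is a minimal illustration under stated assumptions: the forecaster signature, the `moving_average_3` candidate, and the price series are hypothetical, and the naive "last value" baseline is an internal benchmark swapped in here, not a substitute for the external benchmarks mentioned above.

```python
from typing import Callable

# Hypothetical forecaster signature: takes the history seen so far, returns the next value.
Forecaster = Callable[[list[float]], float]


def mean_absolute_error(actual: list[float], predicted: list[float]) -> float:
    if len(actual) != len(predicted) or not actual:
        raise ValueError("series must be non-empty and equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def walk_forward_mae(series: list[float], train_fraction: float, forecaster: Forecaster) -> float:
    """Split chronologically, then forecast each test point using only earlier data."""
    cut = int(len(series) * train_fraction)
    if cut < 1 or cut >= len(series):
        raise ValueError("train_fraction leaves an empty train or test segment")
    history, test = list(series[:cut]), series[cut:]
    predictions = []
    for actual in test:
        predictions.append(forecaster(history))
        history.append(actual)  # reveal the true value only after forecasting it
    return mean_absolute_error(test, predictions)


def naive_last_value(history: list[float]) -> float:
    """Persistence baseline: the next value equals the latest one."""
    return history[-1]


def moving_average_3(history: list[float]) -> float:
    """Illustrative candidate model: mean of the last three observations."""
    window = history[-3:]
    return sum(window) / len(window)


if __name__ == "__main__":
    prices = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]  # hypothetical trending series
    baseline = walk_forward_mae(prices, 0.5, naive_last_value)
    candidate = walk_forward_mae(prices, 0.5, moving_average_3)
    print(baseline, candidate)  # 1.0 2.0
```

A candidate that cannot beat the naive baseline out of sample is a warning sign, however well it fits the training window.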

Transparent methodology, reproducible results, and sensitivity testing make conclusions credible and let decision-makers verify and adapt the analysis for themselves.
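A simple form of sensitivity testing is one-at-a-time perturbation: shift each input up and down by a fixed percentage while holding the others at baseline, and see how far the output moves. The sketch below assumes a hypothetical `net_inflation` metric and illustrative baseline figures; any model that maps named inputs to a single number could be passed in instead.

```python
from typing import Callable

Model = Callable[[dict[str, float]], float]


def one_at_a_time_sensitivity(model: Model, base: dict[str, float],
                              rel_step: float = 0.1) -> dict[str, tuple[float, float]]:
    """Shift each input down and up by rel_step (others held at base).
    Returns, per input, the model outputs for (input shifted down, input shifted up)."""
    if not 0 < rel_step < 1:
        raise ValueError("rel_step must be between 0 and 1")
    results = {}
    for name, value in base.items():
        low_inputs = {**base, name: value * (1 - rel_step)}
        high_inputs = {**base, name: value * (1 + rel_step)}
        results[name] = (model(low_inputs), model(high_inputs))
    return results


def net_inflation(inputs: dict[str, float]) -> float:
    """Hypothetical metric: net supply growth per period as a fraction of supply."""
    return (inputs["issuance"] - inputs["burn"]) / inputs["supply"]


if __name__ == "__main__":
    base = {"issuance": 50_000.0, "burn": 20_000.0, "supply": 1_000_000.0}
    print(f"base: {net_inflation(base):.4f}")
    for name, (low, high) in one_at_a_time_sensitivity(net_inflation, base).items():
        print(f"{name:>8}: {low:.4f} .. {high:.4f}")
```

Inputs whose shifts move the output most deserve the closest scrutiny; in this toy metric, a ±10% change in issuance swings net inflation by about ±0.005, while the same change in burn swings it by about ±0.002.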

Conclusion

Digital token analysis blends transparent methodologies with real-time data to reveal price drivers and risk. By mapping supply shifts, liquidity depth, staking, and governance signals to price dynamics, it enables disciplined forecasting and robust validation. Example: a hypothetical governance-led burn reducing circulating supply, paired with deeper liquidity, could precede a measurable price uptick confirmed by sensitivity tests and external benchmarking. Such data-driven rigor helps separate narratives from observable signals, supporting sustainable, credible decision-making in token markets.