Neural Beam 935491424 Apex Node

The Neural Beam 935491424 Apex Node is a proposed distributed, edge-centric framework for real-time AI inference. It combines neural processing with hierarchical, fabric-based partitioning to minimize data movement and preserve determinism. The approach aims for low latency and consistent throughput across localized domains, while addressing deployment trade-offs and observability. Because the design splits work into modular subtasks, its performance guarantees and resilience deserve scrutiny, and questions about integration and operational controls remain open.

What Is Neural Beam 935491424 Apex Node and Why It Matters?

Neural Beam 935491424 Apex Node is a theoretical construct bridging advanced neural processing with edge-computing capabilities, designed to streamline real-time decision-making in complex systems.

It defines a framework where neural beam strategies power systematic edge inference for constrained environments.

The Apex Node enables scalable management of real-time workloads, emphasizing autonomy, reliability, and freedom to operate at the network edge.

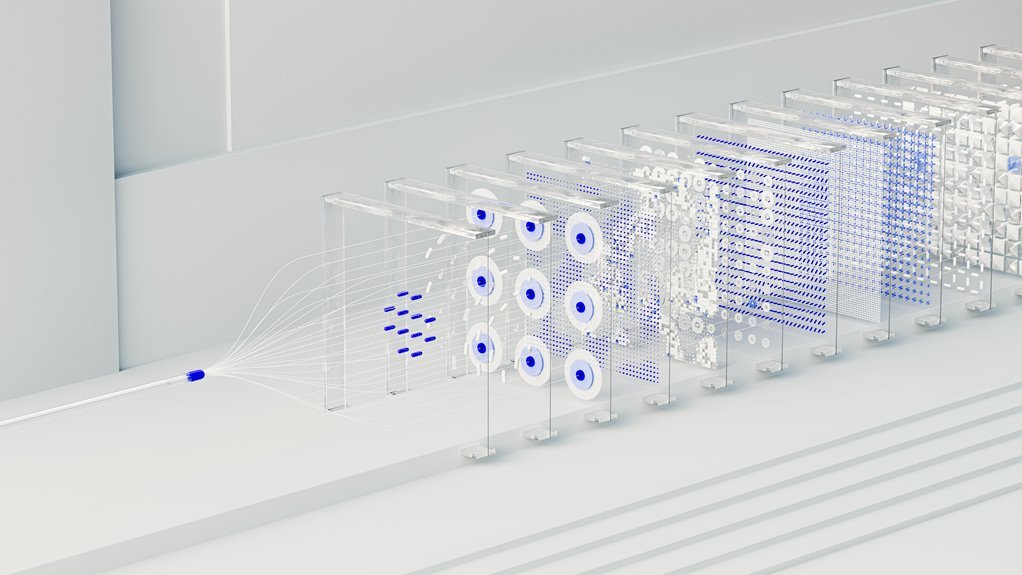

How the Apex Node Architecture Accelerates AI Inference at the Edge

The Apex Node architecture accelerates AI inference at the edge by distributing processing across a hierarchical, low-latency fabric. Nodes execute work collaboratively and keep computation close to where the data originates, which minimizes data movement and reduces edge latency.
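One way to picture locality in a hierarchical fabric is a request that is served by the nearest node with spare capacity and only moves up a tier when the local node is full. The sketch below is illustrative only: `FabricNode`, its `capacity` and `in_flight` fields, and the `route` function are hypothetical names, not part of any published Apex Node interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FabricNode:
    """A node in a hypothetical hierarchical fabric (leaf -> regional -> ...)."""
    name: str
    capacity: int                      # assumed limit on concurrent requests
    in_flight: int = 0                 # requests currently being served
    parent: Optional["FabricNode"] = None


def route(origin: FabricNode) -> Optional[FabricNode]:
    """Serve the request as close to its origin as possible.

    Walks from the origin node up through its parents and claims a slot on
    the first node with spare capacity. Returns None if every tier is full.
    """
    node: Optional[FabricNode] = origin
    while node is not None:
        if node.in_flight < node.capacity:
            node.in_flight += 1
            return node
        node = node.parent
    return None


if __name__ == "__main__":
    regional = FabricNode("regional", capacity=2)
    edge = FabricNode("edge", capacity=1, parent=regional)
    for _ in range(4):
        chosen = route(edge)
        print(chosen.name if chosen else "rejected")
```

Escalating only on saturation keeps most traffic on the local tier, which is the property the fabric description above relies on to limit data movement.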

Model partitioning provides scalable concurrency: each node handles a specialized subtask, which reduces bandwidth use and preserves determinism for edge deployments without centralized bottlenecks.
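As a rough illustration of model partitioning, the sketch below splits a sequence of layers into contiguous stages, one per node, so that the most heavily loaded node carries as little work as possible. The per-layer costs and the function name `partition_layers` are assumptions made for this example; the source does not specify how the Apex Node partitions a model.

```python
from typing import List


def partition_layers(costs: List[int], n_nodes: int) -> List[List[int]]:
    """Split layer indices into at most n_nodes contiguous stages.

    Uses binary search over the allowed per-stage cost to minimize the cost
    of the most expensive stage. Costs are assumed to be positive integers
    (for example, estimated milliseconds per layer).
    """
    if n_nodes < 1:
        raise ValueError("n_nodes must be at least 1")
    if not costs:
        return []

    def stages_needed(cap: int) -> int:
        # Greedily count how many stages are required if no stage may exceed cap.
        count, running = 1, 0
        for c in costs:
            if running + c > cap:
                count += 1
                running = c
            else:
                running += c
        return count

    lo, hi = max(costs), sum(costs)
    while lo < hi:
        mid = (lo + hi) // 2
        if stages_needed(mid) <= n_nodes:
            hi = mid
        else:
            lo = mid + 1

    # Rebuild the actual stages using the smallest feasible cap.
    stages: List[List[int]] = []
    current: List[int] = []
    running = 0
    for i, c in enumerate(costs):
        if current and running + c > lo:
            stages.append(current)
            current, running = [], 0
        current.append(i)
        running += c
    stages.append(current)
    return stages


if __name__ == "__main__":
    print(partition_layers([4, 2, 3, 5, 1, 6], n_nodes=3))
```

Keeping stages contiguous means each node only passes activations to the next node in the chain, which fits the goal of limiting data movement.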

Real-Time Workloads and Performance Benchmarks You Can Trust

Real-time workloads on the Apex Node are evaluated through standardized benchmarks that emphasize low latency, deterministic scheduling, and consistent throughput under varied edge conditions. The assessment focuses on edge-to-edge transitions and stable performance across heterogeneous networks.

Latency profiling shows whether response times are predictable, which supports capacity planning and reliability guarantees. Benchmark results should remain transparent, repeatable, and interpretable for operators prioritizing freedom and trust.
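A latency profile is usually summarized with percentiles rather than an average, because tail latency is what breaks real-time guarantees. The following is a minimal sketch using the nearest-rank percentile method; the function names and the `tail_spread` metric (p99 minus p50) are choices made for this example, not a benchmark defined by the source.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of data at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


def latency_profile(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize latency samples (in milliseconds) as median and tail percentiles."""
    p50 = percentile(samples_ms, 50)
    p95 = percentile(samples_ms, 95)
    p99 = percentile(samples_ms, 99)
    return {"p50": p50, "p95": p95, "p99": p99, "tail_spread": p99 - p50}


if __name__ == "__main__":
    samples = [float(i) for i in range(1, 101)]  # synthetic data: 1 ms .. 100 ms
    print(latency_profile(samples))
```

A small and stable `tail_spread` across runs is one simple signal that response times are predictable enough to plan capacity around.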

Deployment Considerations, Trade-Offs, and Best Practices

Deployment considerations for the Apex Node balance architectural constraints, network heterogeneity, and operational risk to determine optimal deployment models.

The analysis identifies critical trade-offs between latency, resilience, and cost, guiding configuration choices for real-time workloads.
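The latency, resilience, and cost trade-off can be made explicit by treating latency and resilience as hard constraints and cost as the quantity to minimize. The sketch below assumes each candidate deployment is described by a measured p99 latency, a replica count, and a monthly cost; `DeploymentOption`, `choose_option`, and all figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class DeploymentOption:
    """A candidate deployment model (all fields are illustrative)."""
    name: str
    p99_latency_ms: float   # measured or estimated tail latency
    replicas: int           # used here as a simple proxy for resilience
    monthly_cost: float


def choose_option(
    options: Iterable[DeploymentOption],
    latency_budget_ms: float,
    min_replicas: int,
) -> Optional[DeploymentOption]:
    """Return the cheapest option that meets the latency budget and replica floor."""
    feasible = [
        o for o in options
        if o.p99_latency_ms <= latency_budget_ms and o.replicas >= min_replicas
    ]
    return min(feasible, key=lambda o: o.monthly_cost, default=None)


if __name__ == "__main__":
    candidates = [
        DeploymentOption("single-edge", p99_latency_ms=8, replicas=1, monthly_cost=100),
        DeploymentOption("edge-pair", p99_latency_ms=10, replicas=2, monthly_cost=220),
        DeploymentOption("regional", p99_latency_ms=35, replicas=3, monthly_cost=150),
    ]
    best = choose_option(candidates, latency_budget_ms=15, min_replicas=2)
    print(best.name if best else "no feasible option")
```

Returning `None` when nothing is feasible forces the team to relax a constraint deliberately rather than silently accept a deployment that misses its latency or resilience target.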

Best practices emphasize modular deployment, observability, and standardized runbooks.

Freedom-minded teams should prioritize scalable, secure architectures that sustain predictable performance under diverse conditions.

Conclusion

The Neural Beam 935491424 Apex Node proposes a path to deterministic, low-latency edge AI inference through hierarchical, fabric-based partitioning that minimizes data movement. Its architecture blends locality-aware processing with modular deployment, aiming for scalable real-time decision-making across distributed systems. Trade-offs in resource planning remain, and the expected gains in throughput and latency need to be confirmed with repeatable benchmarks and supported by standardized runbooks. In short, this node could redefine edge intelligence if those gains hold up in practice.